Empower your generative AI application with a comprehensive custom observability solution

AWS Machine Learning - AI

OCTOBER 29, 2024

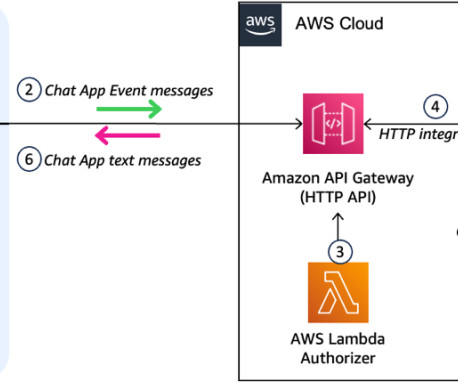

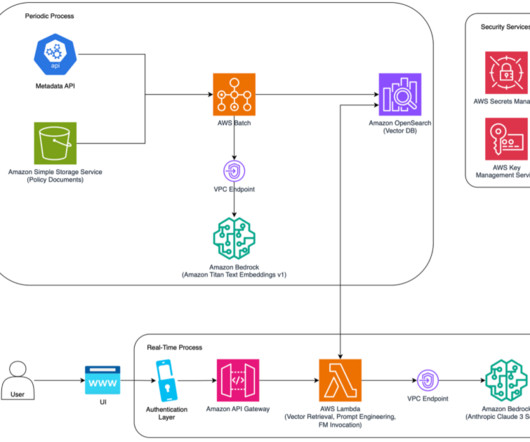

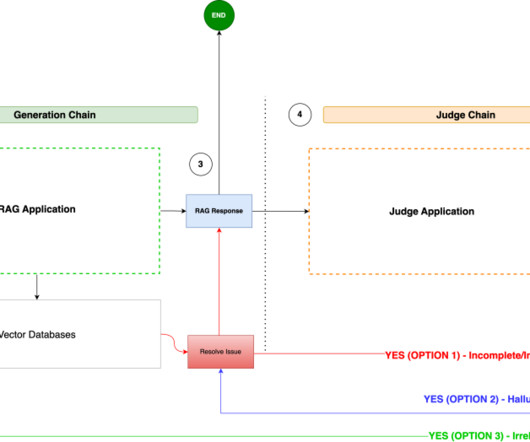

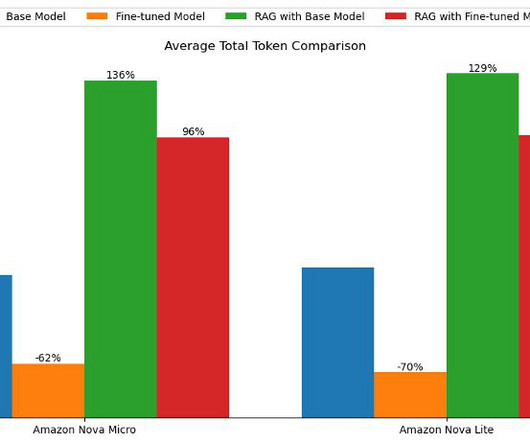

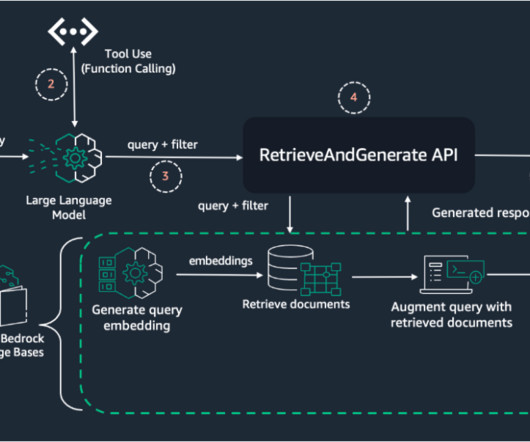

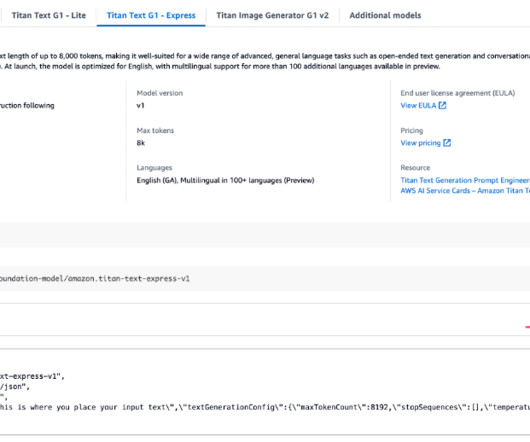

Recently, we’ve been witnessing the rapid development and evolution of generative AI applications, with observability and evaluation emerging as critical aspects for developers, data scientists, and stakeholders. In the context of Amazon Bedrock , observability and evaluation become even more crucial.

Let's personalize your content