Amazon Q Business simplifies integration of enterprise knowledge bases at scale

AWS Machine Learning - AI

FEBRUARY 11, 2025

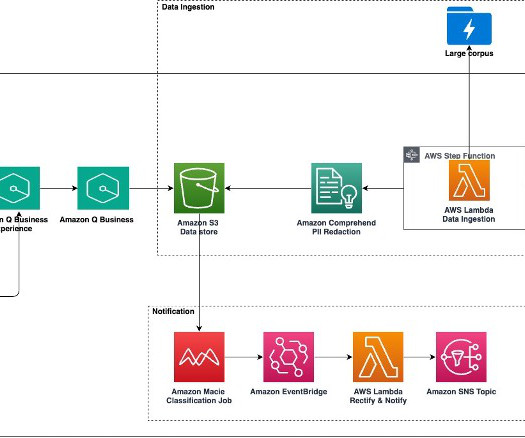

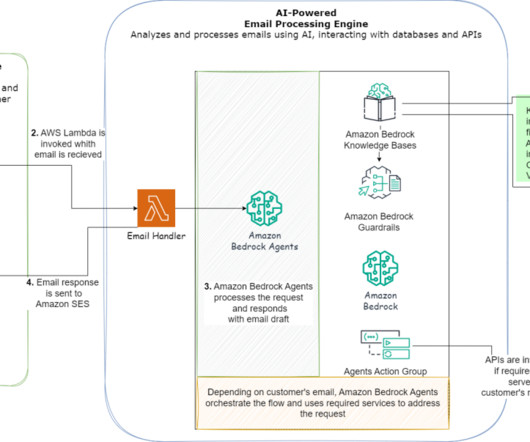

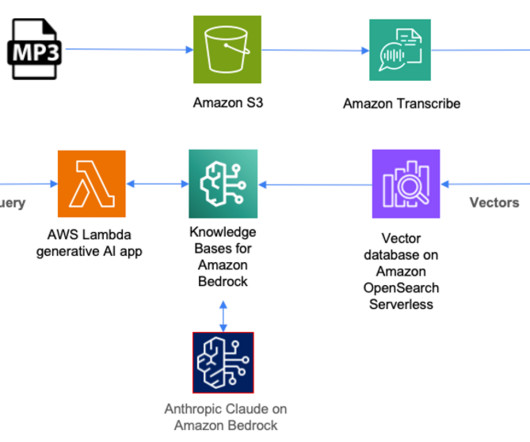

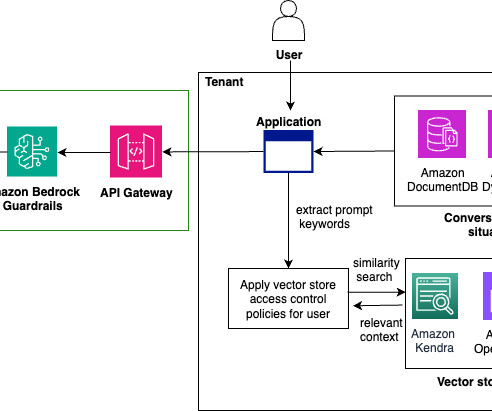

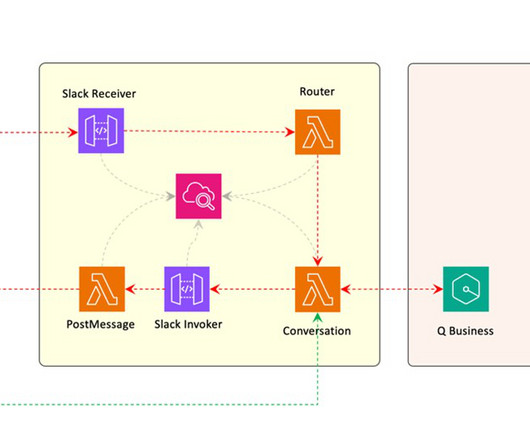

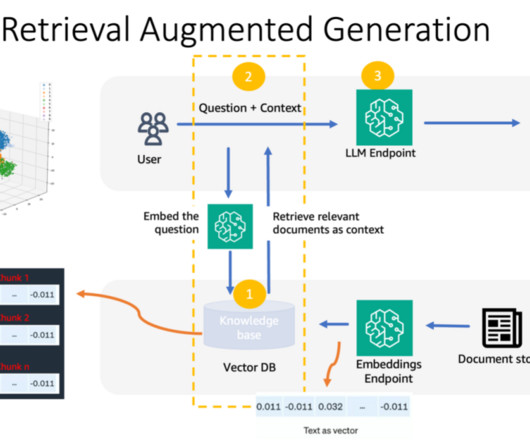

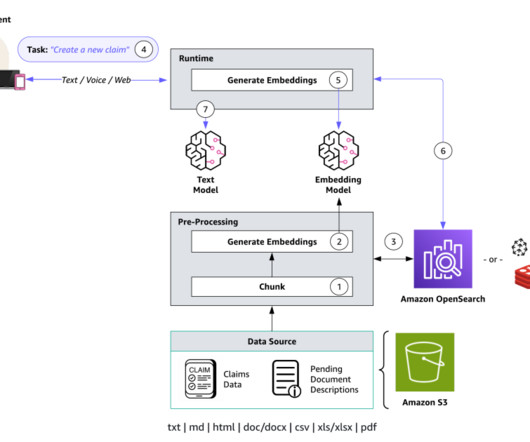

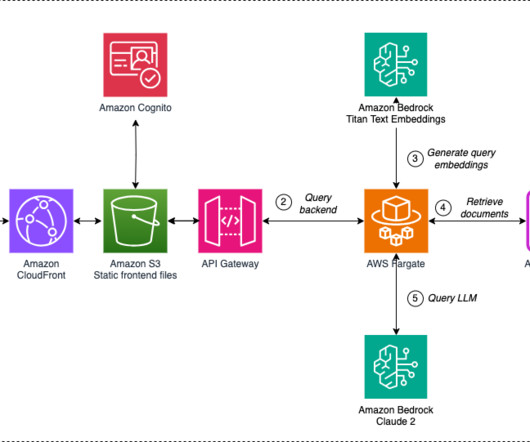

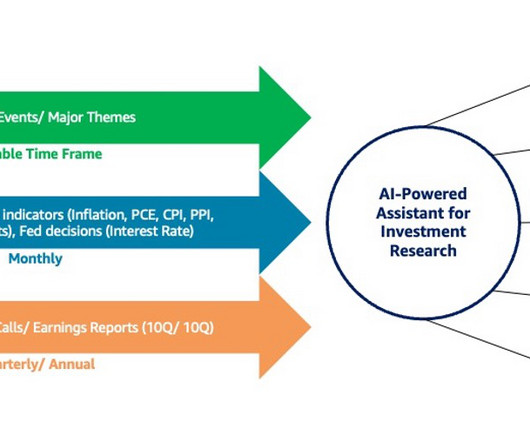

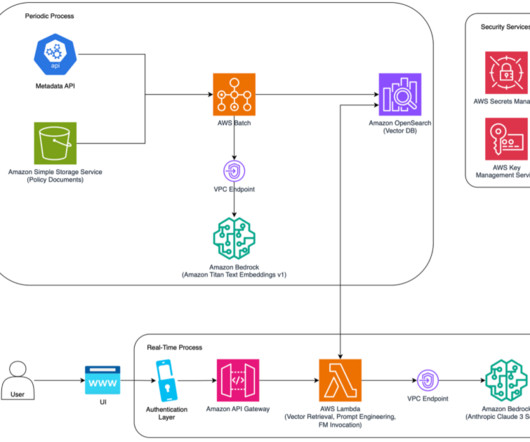

Ingesting large volumes of enterprise data poses significant challenges, particularly in orchestrating workflows to gather data from diverse sources. In this post, we propose an end-to-end solution using Amazon Q Business to simplify the integration of enterprise knowledge bases at scale.
